Powerful Statistical Analysis or Biased Propaganda?

Posted: August 19, 2012 Filed under: Measurement and Analytics | Tags: climate change, james hansen, normal distribution, standard deviation, standard normal distribution, statistical thinking

It’s not often the standard normal distribution takes centre stage in a political debate, but such is the case with climate change.

In their article, “Bell Weather: A statistical analysis shows how things really are heating up,” The Economist highlights a recently published report by the controversial Dr. James Hansen, head of the Goddard Institute for Space Studies. As you’ll see below, he and his colleagues have applied our old friend the standard normal (Z) distribution to recent climate data to argue that extreme warm weather is becoming more likely. They point to both a shift in the mean towards hotter temperatures and an increase in the standard deviation, each of which makes events beyond 3 sigma (that is, 3 standard deviations) more likely.

Dr. Hansen is not without his critics. This blog is one of many that have critiqued his logic and his use of data and statistics and found them wanting. As with many things, I think it is best when each of us looks at the situation and reaches our own conclusions.

Here is a link to one of the critiques of the paper: http://climateswag.wordpress.com/2012/08/07/james-hansen-study-revisited-part-1/

Here is a link to the original paper so that you can assess it for yourselves: http://www.pnas.org/content/early/2012/07/30/1205276109.full.pdf+html?sid=296e42fe-6d58-4d54-8891-61424b9dcfb9

Here’s what The Economist had to say:

Are heatwaves more common than they used to be? That is the question addressed by James Hansen and his colleagues in a paper just published in the Proceedings of the National Academy of Sciences. Their conclusion is that they are—and the data they draw on do not even include the current scorcher that is drying up much of North America and threatening its harvest. The team’s method of presentation, however, has caused a stir among those who feel that scientific papers should be dispassionate in their delivery of the evidence. For the paper, interesting though the evidence it delivers is, is far from dispassionate.

Dr Hansen, who is head of the Goddard Institute for Space Studies, a branch of NASA that is based in New York, is a polemicist of the risks of man-made global warming. Despite his job running a government laboratory, he has managed to get himself arrested on three occasions for protesting against those he thinks are causing such climate change. He clearly states in the paper’s introduction that he was looking for a way of conveying his fears to a sceptical public.

Some of that scepticism is connected with the fact that although changes in the climate will inevitably result in changes in the weather, ascribing any given event—such as a local heatwave—to climate change is impossible. Dr Hansen has therefore tried to go beyond the study of individual causes by demonstrating that what was once unusual is now common.

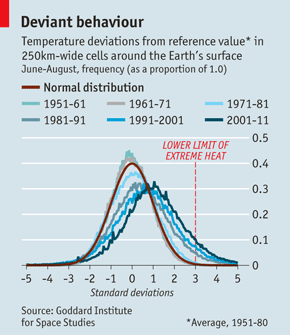

To do so, he and his colleagues took 60 years’ worth of data (the period from 1951 to 2011) from the Goddard Institute’s surface air-temperature analysis. This analysis divides the planet’s surface into cells 250km (about 150 miles) across and records the average temperature in each cell. The researchers broke their data into six decade-long blocks and compared those blocks’ statistical properties.

They looked in particular at the three months which constitute summer in the northern hemisphere (June, July and August). First, they created a reference value for each cell. This was its average temperature over those three months from 1951-80. Then they calculated how much the temperature in each cell deviated from the cell’s reference value in any given summer. That done, they plotted a series of curves, one for each decade, that showed how frequently each deviant value occurred.
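For readers who want to try this step themselves, here is a minimal Python sketch of the anomaly calculation for a single grid cell. It is not the paper's code: the function name, the `{year: temperature}` layout, and the sample temperatures are all assumptions made up for illustration. Only the 1951-80 reference window comes from the article.

```python
from statistics import mean

def summer_anomalies(temps_by_year, ref_start=1951, ref_end=1980):
    """Return {year: deviation from the reference-period mean} for one cell.

    temps_by_year maps a year to that cell's mean June-August temperature
    (a hypothetical data layout, not the Goddard Institute's format).
    """
    reference = mean(
        t for year, t in temps_by_year.items() if ref_start <= year <= ref_end
    )
    return {year: t - reference for year, t in temps_by_year.items()}

# Made-up temperatures (degrees C) for one cell, purely illustrative.
cell = {1951: 20.0, 1965: 21.0, 1980: 19.0, 2005: 22.5}
anomalies = summer_anomalies(cell)
print(anomalies[2005])  # 2.5 degrees above the 1951-80 average of 20.0
```

Repeating this for every cell and every summer gives the set of deviations whose frequencies are then plotted decade by decade.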

Since small deviations are common and large ones are rare, the result of plotting data in this way is a curve shaped somewhat like the cross-section of a bell. Such distributions can be modelled by a mathematical function known as the normal distribution—or “bell curve”.

Whether based on data or a mathematical ideal, such a curve always has two parameters. These are its mean (the value of all of the data points added together and divided by the number of points; this is also the peak of an ideal curve) and its standard deviation, which measures how wide the bell is. The standard deviation is calculated from all of the individual deviations of the data points.
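As a quick illustration of those two parameters, the sketch below computes them with Python's standard library. The deviation values are invented, and using the population formula (`pstdev`) rather than the sample formula is my assumption; the article does not say which one the paper uses.

```python
from statistics import mean, pstdev

# Invented deviations from a reference value, in degrees C.
deviations = [-2.0, -1.0, 0.0, 1.0, 2.0]

mu = mean(deviations)       # sum of the points divided by their count
sigma = pstdev(deviations)  # square root of the mean squared distance from mu

print(mu)               # 0.0
print(round(sigma, 4))  # 1.4142, i.e. the square root of 2
```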

To see what was going on, Dr Hansen superimposed the actual curves for each decade from the fifties to the noughties on a normal distribution, which acted as a reference curve. To make all the curves comparable, he expressed the values of the actual deviations as fractions of a standard deviation, and their frequencies as proportions of their total number.
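The rescaling described above can be sketched as follows. This is only an illustration under my own assumptions: the function name, the bin width of half a standard deviation, and the sample numbers are hypothetical and are not taken from the paper.

```python
import math
from collections import Counter

def standardized_frequencies(anomalies, ref_sigma, bin_width=0.5):
    """Return {bin_left_edge: share_of_points}, with anomalies in sigma units."""
    z_scores = [a / ref_sigma for a in anomalies]
    # Assign each z-score to the bin whose left edge lies at or below it.
    counts = Counter(math.floor(z / bin_width) * bin_width for z in z_scores)
    total = len(z_scores)
    return {edge: n / total for edge, n in sorted(counts.items())}

# Invented anomalies with a reference standard deviation of 1.0.
freqs = standardized_frequencies([0.1, 0.4, 1.2, -0.3], ref_sigma=1.0)
print(freqs)  # {-0.5: 0.25, 0.0: 0.5, 1.0: 0.25}
```

Because every decade's values are divided by the same reference standard deviation and every count is divided by the total, curves built this way can be laid over a single standard normal curve.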

As the chart shows, there are two trends. First, the peaks of the data-based curves move right, over time, with respect to the reference curve. In other words, the average temperature is rising. Second, more recent curves are flatter. A flatter curve means a bigger standard deviation and a wider spread of results.

If the mean of each curve were the same, such flattening would imply both more cold periods and more hot ones. But because the mean is rising, the effect at the cold end of the curves is diminished, while that at the hot end is enhanced. The upshot is more hot periods of local weather.
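To see how a wider curve and a shifted mean interact, here is a small sketch using `statistics.NormalDist` from Python's standard library. The shift of one standard deviation and the widening to 1.5 are values I made up for illustration. They are not estimates from the paper.

```python
from statistics import NormalDist

def tail_fractions(mu, sigma, threshold=3.0):
    """Share of a normal curve below -threshold and above +threshold.

    mu, sigma and threshold are all in units of the reference
    standard deviation.
    """
    dist = NormalDist(mu, sigma)
    cold = dist.cdf(-threshold)
    hot = 1.0 - dist.cdf(threshold)
    return cold, hot

reference = tail_fractions(0.0, 1.0)   # the reference bell curve
wider = tail_fractions(0.0, 1.5)       # flatter curve, same mean (hypothetical)
shifted = tail_fractions(1.0, 1.5)     # flatter and moved right (hypothetical)

for label, (cold, hot) in [("reference", reference),
                           ("wider", wider),
                           ("wider + shifted", shifted)]:
    print(f"{label:16s} cold tail {cold:.4f}   hot tail {hot:.4f}")
```

With these made-up parameters, widening alone fattens both tails equally, while adding the shift shrinks the cold tail again and fattens the hot tail further, which is the pattern the paragraph above describes.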

Moreover, the bell-curve method makes it possible to say just how much more hot weather there is. Dr Hansen defined extreme conditions as those occurring more than three standard deviations from the mean of his reference curve. In that curve, this would be an eighth of a percent at each end, which is more or less the value in the curve for 1951-61. Nowadays, though, extreme conditions (or, at least, those that would have been considered extreme half a century ago) can be found at any given time in about 8% of the world.
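The "eighth of a percent" figure is easy to check. The sketch below computes the share of a standard normal curve lying more than three standard deviations above the mean, then compares it with the 8% figure quoted above. The function name is mine, and the ratio is simple arithmetic on the article's rounded numbers, not a result reported by the paper.

```python
import math

def upper_tail(z):
    """P(Z > z) for a standard normal variable."""
    return 0.5 * math.erfc(z / math.sqrt(2))

reference_share = upper_tail(3.0)  # about 0.00135, i.e. roughly 0.135%
recent_share = 0.08                # the "about 8%" quoted in the article
ratio = recent_share / reference_share

print(f"{reference_share:.5f}")  # 0.00135
print(f"{ratio:.0f}")            # 59
```

So the exact value is about 0.135% at each end, close to the article's eighth of a percent (0.125%), and 8% is on the order of sixty times that.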

Local weather patterns do, of course, have local causes. To that extent, they are accidental. But Dr Hansen’s analysis suggests that claims there is more hot weather around than there used to be have substance, too.

Nothing in his analysis speaks of the cause of that substance. That is deliberate. As he says in the paper, he wants the data to speak for themselves—though he is personally convinced that the cause is human-generated emissions of greenhouse gases such as carbon dioxide. But as the United States bakes in what may turn out to be a record heatwave, he hopes he might now persuade those for whom global warming is, as it were, on the back burner, to agree that it is real, and to think about the consequences.

Without going into the details, in my opinion, Hansen has demonstrated in the paper that recent years have in general been warmer than the reference period. However, the paper has not established that the reference period, while stable, is typical of the climate over, say, the past several centuries. What happens if that reference period was somewhat cooler than usual, or if the climate is cyclic? The range of data chosen for analysis is so important that it very much echoes one of the earlier articles published by Bruce. When looking at the data, one needs to look at the bigger picture, in this case the much longer record of the climate.

The Economist article was interesting. It ignores the primary criticism of Dr. Hansen’s study. He picked a very stable and very short time period. The rationale for that selection was weak. Had he used different time periods, his results would probably have been much different. Too many people get bogged down by the statistical analysis. I am reminded of an old saying about statistics: garbage in, garbage out.